Nvidia launches their largest AI GPUs ever with Blackwell

Nvidia Blackwell is a computational monster

At GTC 2024, Nvidia has revealed their “Blackwell” series of AI GPUs. Promising up to a 5x increase in computational performance over today’s Nvidia Hopper GPUs, Blackwell is an AI inferencing monster.

Nvidia’s Hopper GPUs are already selling like hotcakes, and with Blackwell, Nvidia promises to give their customers more: more silicon, more performance, more features, and greater flexibility.

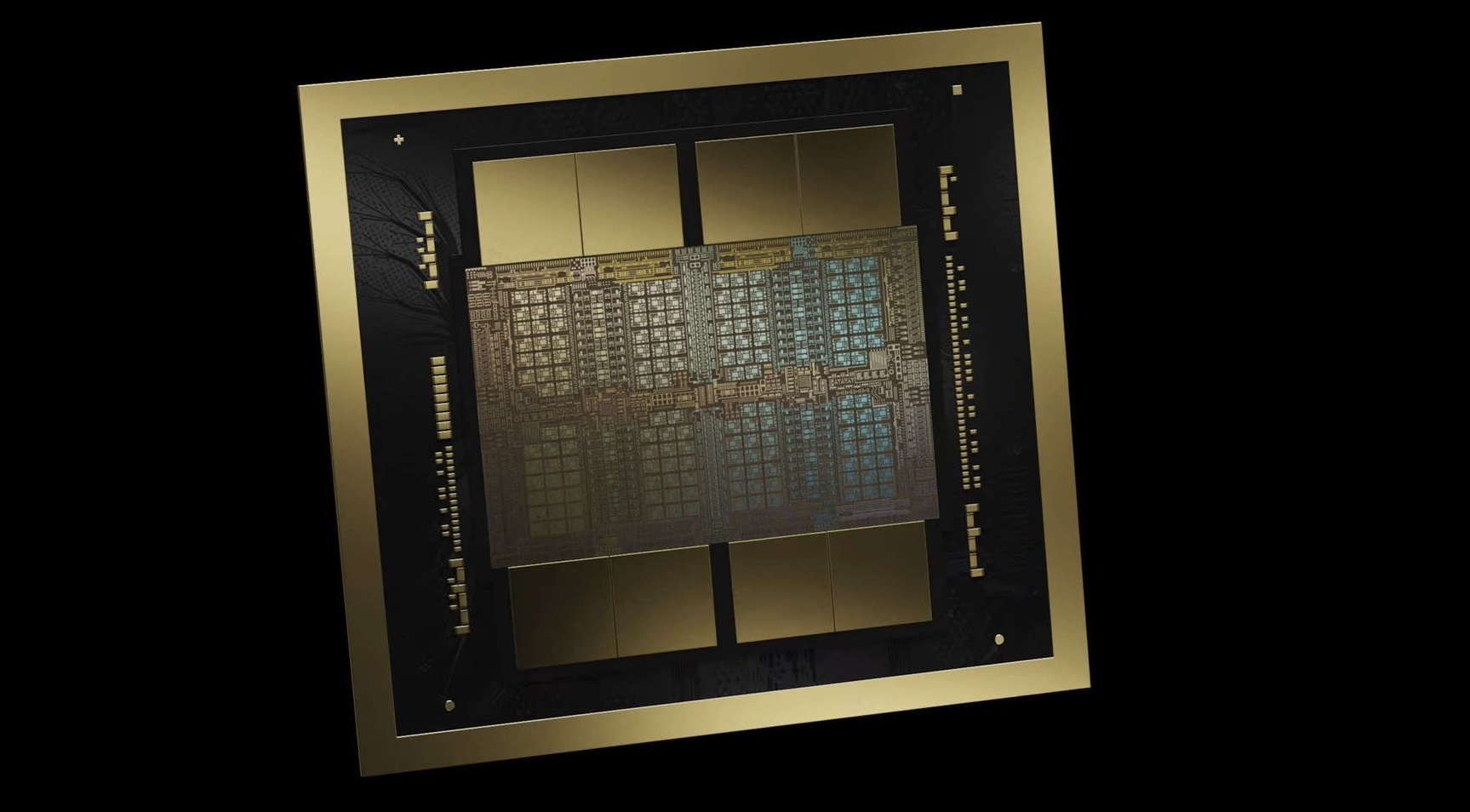

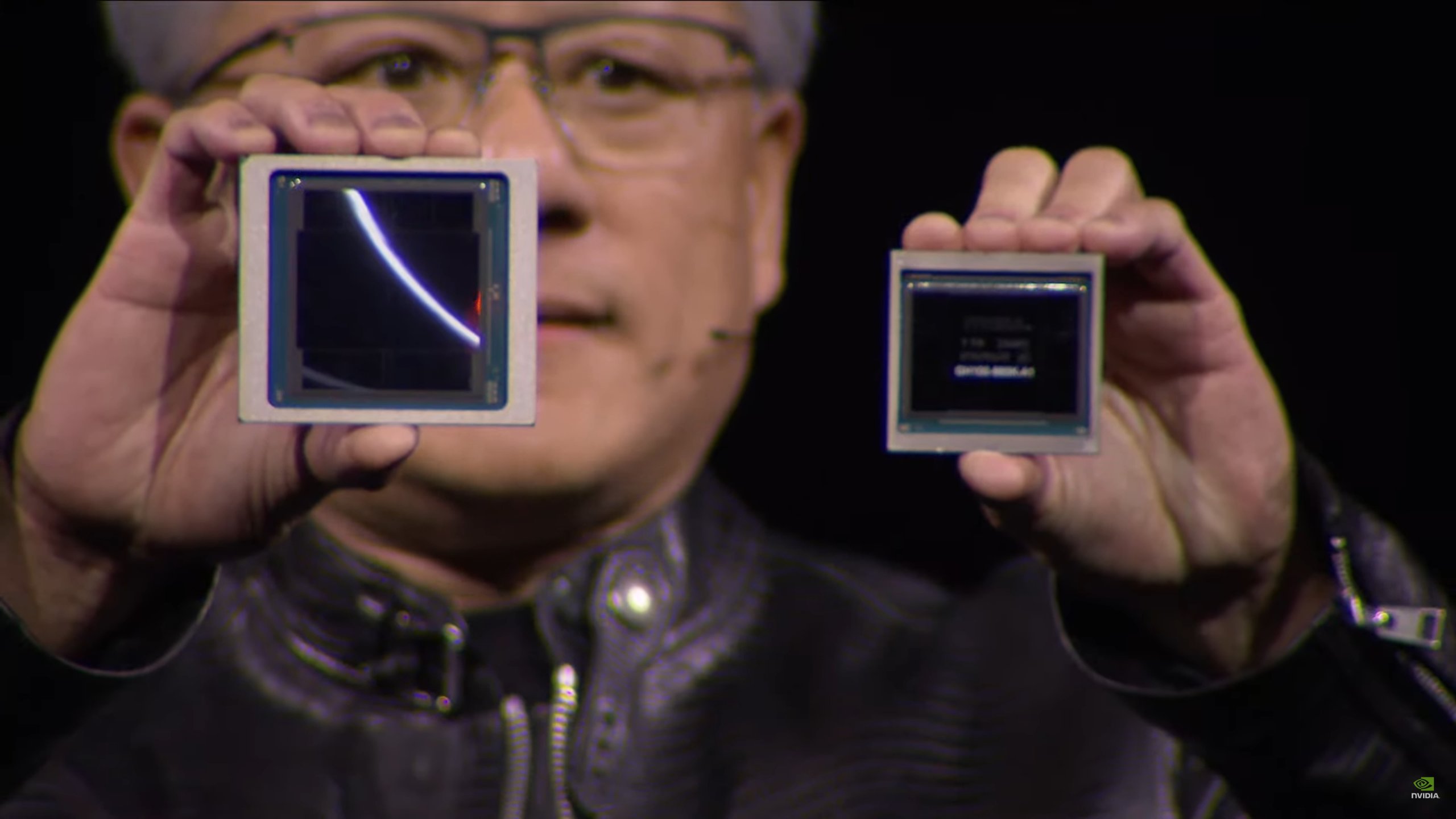

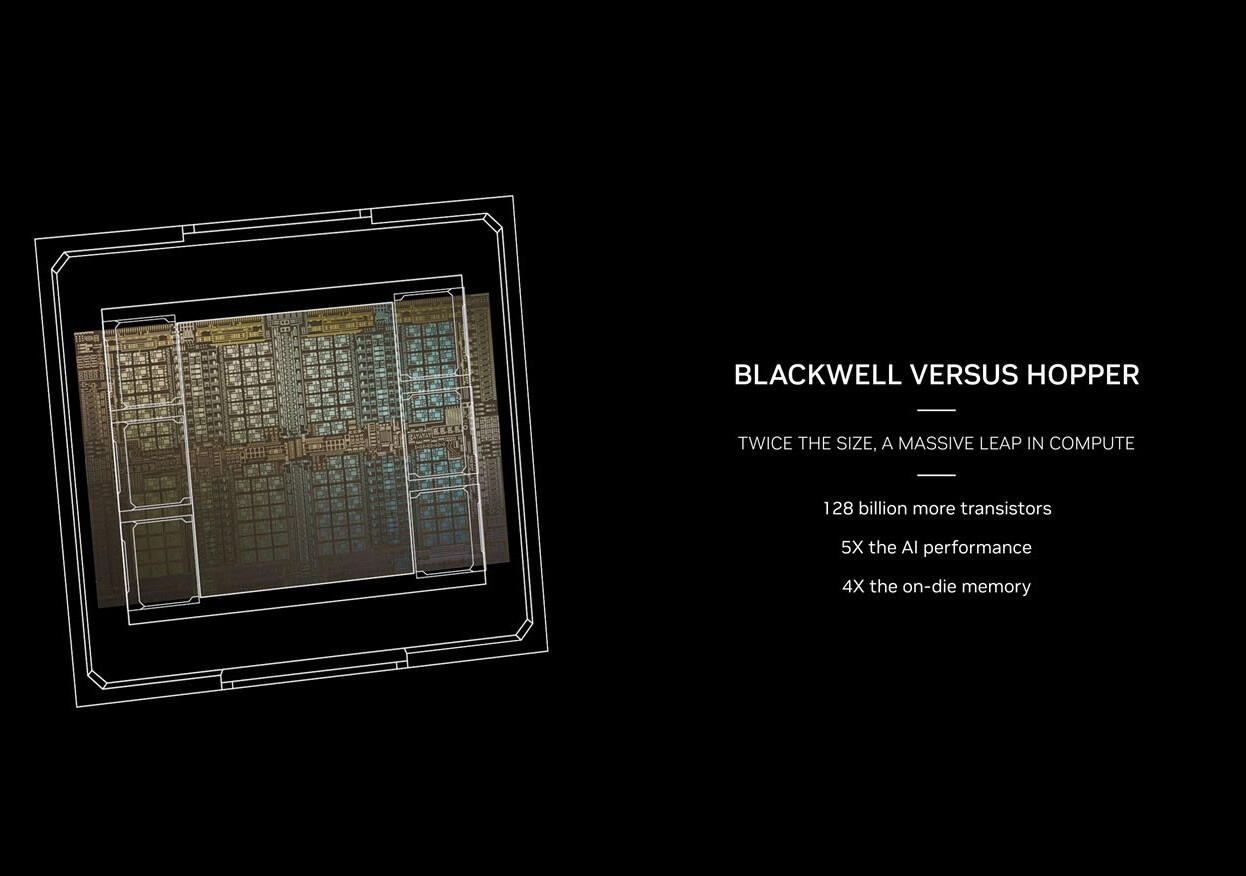

Using chiplet technology, Nvidia has dramatically increased the size of their Blackwell B200 series chips. Below, you can see Nvidia Blackwell beside Nvidia Hopper, with Blackwell being double the size. Blackwell is built on the same TSMC 4nm technology as Hopper (albeit in its more refined 4NP form) and uses two B100 chiplets instead of a single monolithic die.

Nvidia’s two GPU chiplets are connected by a custom 10TB/s interconnect, which offers enough bandwidth and low enough latency to keep the two dies coherent. As a result, the two chips can effectively work together as a single, larger GPU. This is part of the reason behind Nvidia’s huge performance gains with Blackwell.

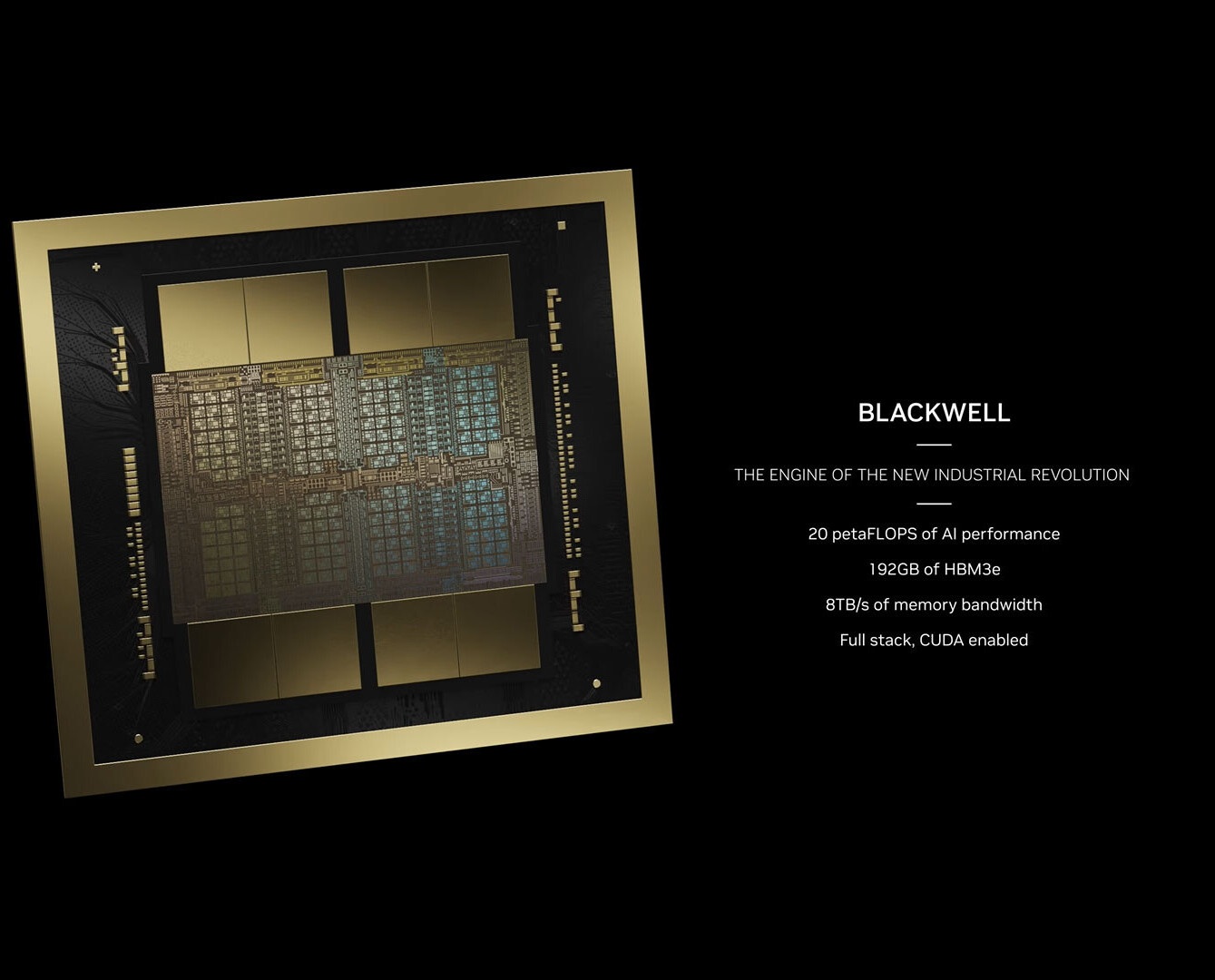

With Blackwell, Nvidia has wired each Blackwell chiplet to four HBM3E memory stacks. This gives B200 an 8192-bit memory interface and 192GB of HBM3E memory, providing the bandwidth needed to crunch the most challenging AI workloads.
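The bus width and capacity above follow from simple per-stack arithmetic. The sketch below assumes the standard 1024-bit interface of a single HBM stack (a property of the HBM standard, not stated in the article) and an even split of the 192GB across all eight stacks; the helper name `b200_memory_config` is hypothetical.

```python
# Hypothetical helper: derive B200's memory configuration from the
# per-chiplet stack count described in the article.
HBM_STACK_BUS_BITS = 1024  # assumption: standard per-stack HBM interface width

def b200_memory_config(chiplets=2, stacks_per_chiplet=4, total_capacity_gb=192):
    """Return (total stacks, bus width in bits, capacity per stack in GB)."""
    stacks = chiplets * stacks_per_chiplet
    bus_width_bits = stacks * HBM_STACK_BUS_BITS
    # Assumption: capacity is split evenly across all stacks.
    gb_per_stack = total_capacity_gb / stacks
    return stacks, bus_width_bits, gb_per_stack

stacks, bus_bits, gb_per_stack = b200_memory_config()
print(f"{stacks} stacks, {bus_bits}-bit bus, {gb_per_stack:g} GB per stack")
# prints "8 stacks, 8192-bit bus, 24 GB per stack"
```

Eight 1024-bit stacks account for the full 8192-bit interface, which implies 24GB per stack under the even-split assumption.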

Huge bandwidth, huge compute

In total, Nvidia’s B200 chip will feature 4x as much memory bandwidth as the company’s original H100 “Hopper” chips. This is thanks to Nvidia’s use of HBM3E memory, a wider memory bus, and more HBM stacks in total.

Thanks to the introduction of FP4 support, each Blackwell B200 chip can deliver up to a 5x performance increase over Nvidia’s Hopper H100. The increase is this large because Hopper does not support FP4 and must compute FP4 workloads at its FP8 rate. In a like-for-like FP8 comparison, the Blackwell B200 is “only” 2.5x faster than the Hopper H100. Even so, this is a huge performance increase.
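The 5x and 2.5x figures fit together if Blackwell runs FP4 at twice its own FP8 rate, while Hopper, lacking FP4, falls back to its FP8 rate. That 2x factor is an assumption inferred from the ratio of the two quoted figures rather than taken from a spec sheet, and the function name below is hypothetical.

```python
# Reconcile the quoted FP4 (5x) and FP8 (2.5x) speedups of B200 over H100.
# Assumption: Blackwell's FP4 throughput is 2x its FP8 throughput
# (inferred from the two quoted figures, not from a published spec).

def fp4_speedup_over_hopper(fp8_speedup, blackwell_fp4_vs_fp8=2.0):
    # Hopper has no FP4 support, so an FP4 workload runs at Hopper's FP8 rate.
    hopper_fp4_vs_fp8 = 1.0
    return fp8_speedup * blackwell_fp4_vs_fp8 / hopper_fp4_vs_fp8

print(fp4_speedup_over_hopper(2.5))  # prints 5.0
```

Setting `blackwell_fp4_vs_fp8=1.0` returns the plain FP8 figure of 2.5x, showing that the gap between the two headline numbers comes entirely from the FP4 format.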

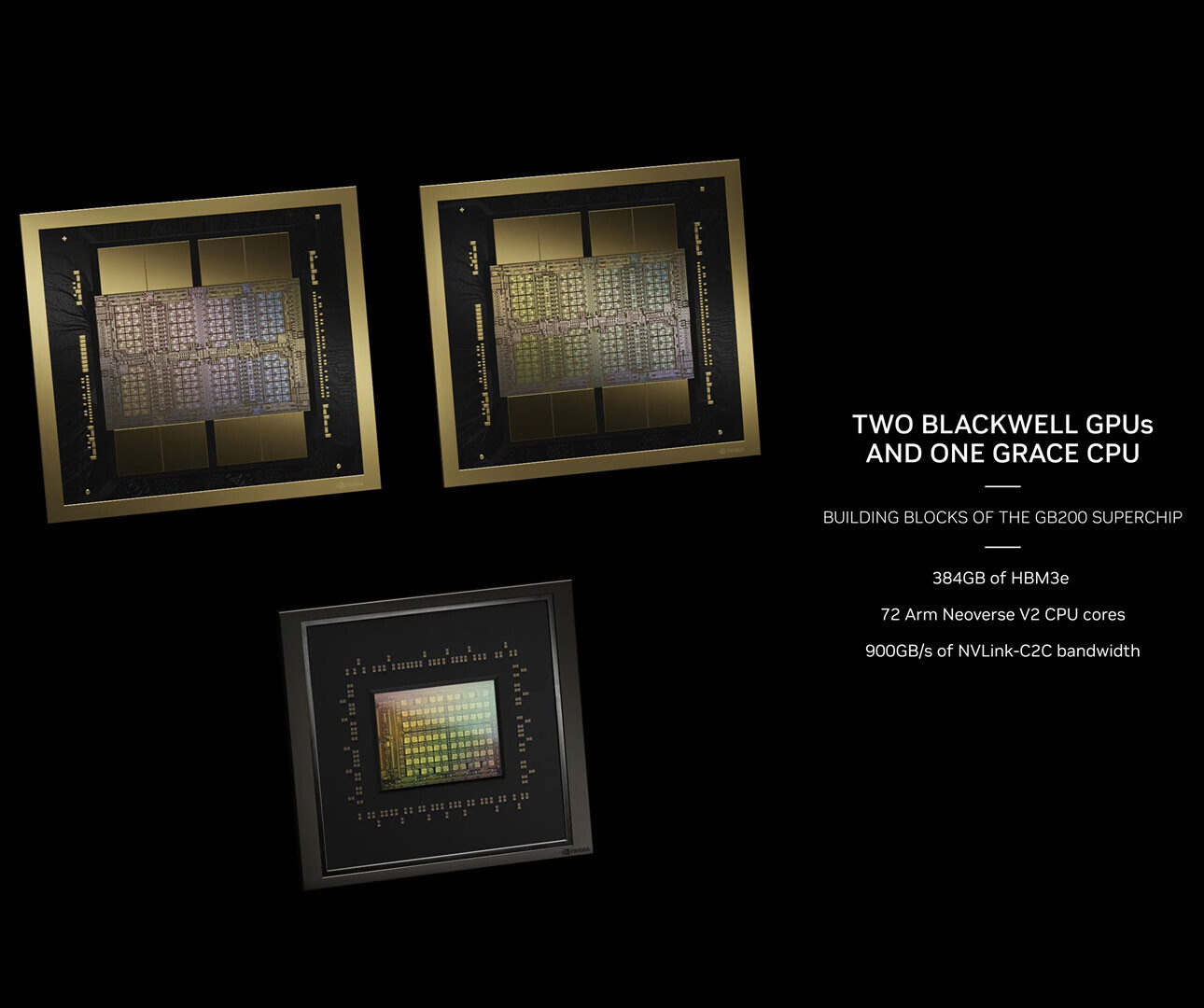

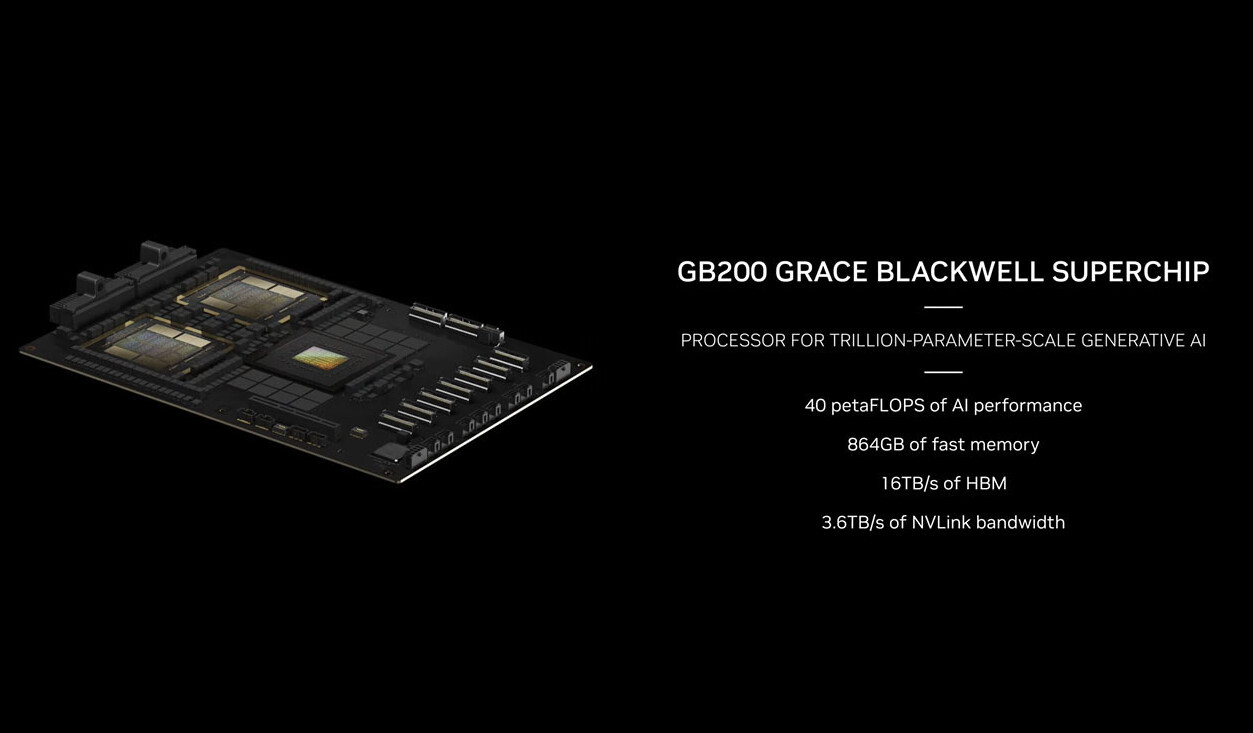

Nvidia Grace-Blackwell superchip

Nvidia has also revealed their GB200 Grace-Blackwell superchip, which pairs a single Grace CPU with two Blackwell B200 chips. In total, this “superchip” offers 863GB of memory (384GB of which is HBM3E), 72 ARM Neoverse V2 CPU cores, and 40 PetaFLOPS of AI performance.
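The GB200 totals can be checked against the per-chip figures. Two B200 chips at 192GB each account for the 384GB of HBM3E, and splitting the 40 PetaFLOPS evenly across the two GPUs implies 20 PetaFLOPS per B200. That split assumes the AI figure comes entirely from the GPUs; the article does not state which precision the 40 PetaFLOPS refers to.

```python
# Sanity-check the GB200 superchip totals against per-B200 figures.
B200_HBM3E_GB = 192    # per-chip HBM3E capacity, from the article
GB200_GPUS = 2         # two B200 chips per GB200
GB200_AI_PFLOPS = 40   # quoted superchip AI performance (precision not stated)

hbm_total_gb = GB200_GPUS * B200_HBM3E_GB
# Assumption: the AI figure comes entirely from the GPUs, split evenly.
pflops_per_b200 = GB200_AI_PFLOPS / GB200_GPUS

print(hbm_total_gb, pflops_per_b200)  # prints 384 20.0
```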

Hopper has already been a huge success for Nvidia, and Blackwell should allow the company to continue riding the AI money train. Blackwell promises a huge boost in AI performance over Hopper, and Hopper is already the most popular AI chip on the planet.

Nvidia plans to ship their new Blackwell AI GPUs later this year in the form of GB200, B200, and B100 chips. B100 is simply a single Blackwell chiplet used as a standalone AI GPU. In a sense, B100 is a direct successor to H100, while B200 doubles its size thanks to its chiplet design. To put it another way, a B100 chip is half of a B200 chip.

With their B200 Blackwell announcement, Nvidia are laughing all the way to the bank. Hopper was already hugely successful, and their B200 and B100 chips should allow Nvidia to maintain their leadership position within the growing AI market.

You can join the discussion on Nvidia’s Blackwell accelerators on the OC3D Forums.